What gets lost when AI writes for us

Protecting originality and nuance in an age of AI writing.

If you had asked me a year ago whether I’d ever use ChatGPT, I would have answered with a resounding “no.” As a writer, I felt a moral obligation to stay away from it.

I still keep my distance from a writing standpoint. But in recent months, I’ve started experimenting with ChatGPT as a preliminary idea-building and information-gathering tool. In particular, I’ve turned to it as a resource when brainstorming ideas for my next book. I’ve asked it to track down research articles for me, and to identify other books and essays that are aligned with the topics I’m interested in writing about. It’s been a helpful starting point, and at times a sounding board.

But I don’t trust ChatGPT to do any substantial work on my behalf, and I don’t want it to write anything for me. I’ve seen it make several errors, especially when it comes to quotes, book titles, and attributions. I keep track of each error so I can share them with my students as a reminder that technology is fallible. I’ve also started tracking its rhetorical patterns — some of which make me cringe as a writer.

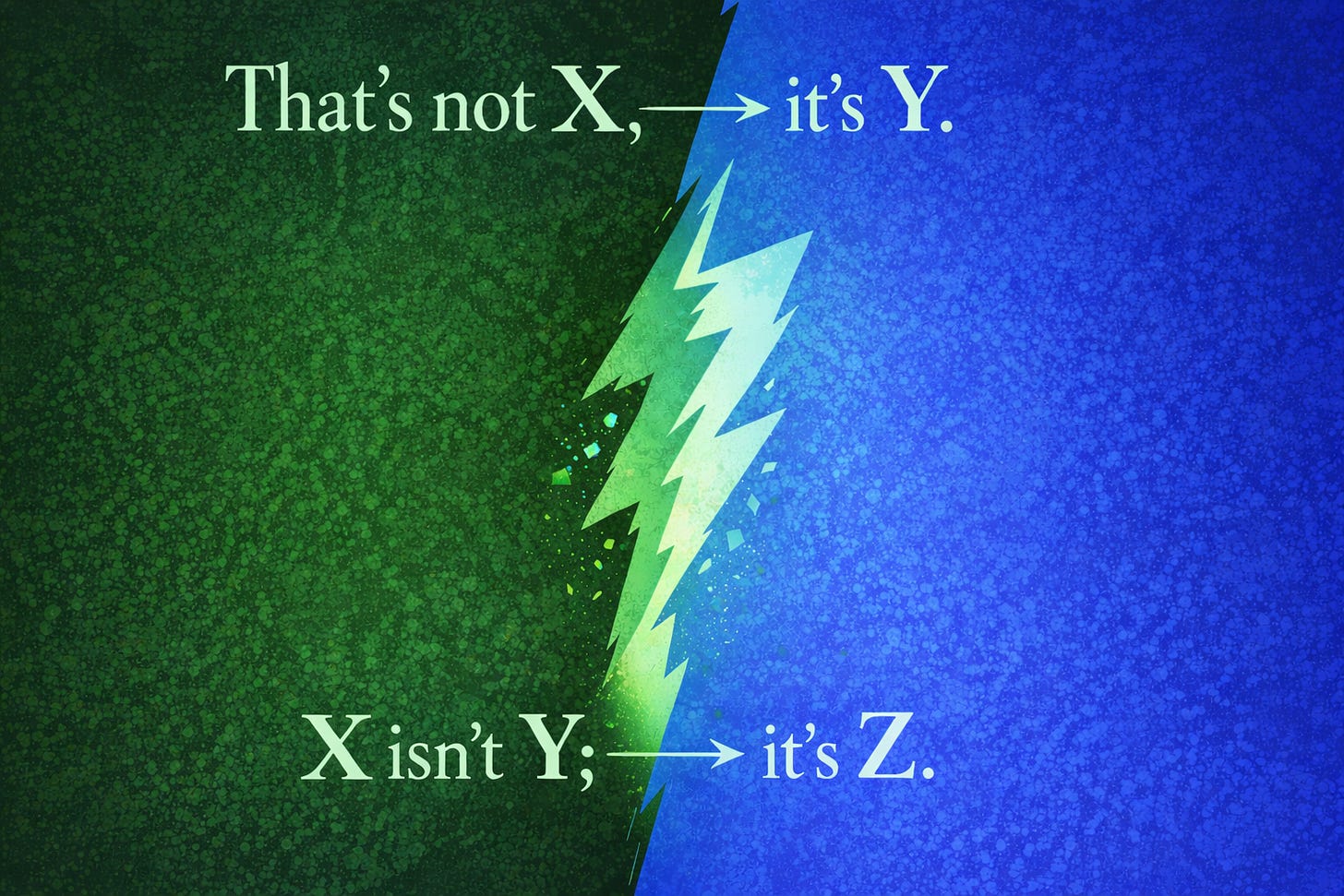

Perhaps my biggest pet peeve is the way in which ChatGPT sets up binaries. It’s quick to use language like “that’s not X, it’s Y,” or “X isn’t Y; it’s Z.”

Here are a few (of many) examples drawn from recent ChatGPT responses to my prompts:

That’s not drifting. That’s leveling up.

That’s not indecision — that’s career architecture.

That’s not just resonance; that’s structure.

That’s not “hooky” in a cheap way — it’s embodied.

That’s not personal; that’s anthropological.

Softness is not weakness. It’s a survival strategy.

It’s not about trauma — it’s about evolution.

The middle isn’t a failure or a pause — it’s part of the story.

The moment isn’t cinematic; it’s ordinary.

Grief’s middle is not where healing stops — it’s where integration begins.

The middle isn’t a void; it’s a generative space.

These declarative statements aren’t bad in and of themselves; they can establish contrast, which is especially effective in persuasive and opinion writing. But when they’re used repeatedly, they lose their intended effect. They’re also rooted in black-and-white language, and they suggest that two seemingly opposing truths, like the “cinematic” and the “ordinary,” cannot coexist.

They ignore the ampersand, that swoopy symbol of togetherness. The ampersand stems from the ligature of “ET,” the Latin word for “and.” Up until the late nineteenth century, it was the final character of the English alphabet. Though the ampersand gradually disappeared from the alphabet, its significance remains integral to the way that humans speak and write.

“Of all the ways we can put the world together, ‘and’ is the most fundamental, the first and simplest knot we learn to tie,” writes Kathryn Schulz in her memoir Lost & Found. “Young children, who may not grasp the specific relationships implied by other conjunctions, are fluent and profligate users of this one. From the plot of Frozen to the first day of kindergarten, life as little kids narrate is just one long string of ‘and then — and then — and then.’”

The author Philip Roth goes so far as to say that “and” is life. “The burden isn’t either/or, consciously choosing from possibilities equally difficult and regrettable – it’s and/and/and/and/and as well,” Roth writes. “Life is and: the accidental and the immutable, the elusive and the graspable, the bizarre and the predictable, the actual and the potential, all the multiplying realities, entangled, overlapping, colliding, conjoined — plus the multiplying illusions!”

So much of my work as a writer exists in the and. In my memoir SLIP, I examine what it means to live in the middle place between acute sickness and full recovery. It’s a place where slips happen and progress is always possible, a place where hope and hardship coexist.

ChatGPT isn’t good at writing about these kinds of nuances. It’s not naturally inclined to examine messy middles or grey spaces. It prefers declarative statements that sound confident but shortchange creativity.

Now that so many people are using ChatGPT (and other generative AI tools) to do their writing for them, I’m seeing these binary “it’s not X, it’s Y” statements everywhere. They seem to be a dead giveaway of AI use, or an indication that people are reading more AI-generated content and letting it influence their writing style.

I sometimes get frustrated when I read people’s writing and seemingly detect AI use. These people are getting credit for producing “good” writing in newsletters and social media posts, and it’s not even their own work, I think. I would never call anyone out, in part because it’s hard to prove that someone has used AI. I also realize that AI is an easy fallback for those who don’t identify as writers and whose time and resources are limited.

But I often find myself wishing I could say: I want to hear your voice, not ChatGPT’s. When people use ChatGPT to do their writing for them, it can start to sound like everyone’s singing in the same choir. There’s harmony, but there’s no memorable originality.

As writers producing our own original work, we have an opportunity to stand out as soloists. We can amplify our own unique voices — the sounds that rise above the rest precisely because they are distinct. They’re born not of large language models but of language that is uniquely ours.

When I asked ChatGPT to describe its writing voice, it said:

If I’m being honest?

My default writing voice — especially in explanatory mode — is structurally decisive. I’m trained (so to speak) on patterns that:

clarify through contrast

define by distinction

resolve tension quickly

reward readability over ambiguity

So my instinct is toward resolution. Which means I naturally reach for binaries. Not because I “believe” in them, but because they’re rhetorically efficient.

You have to give credit to ChatGPT for its candor. But I don’t ever want to credit it for my writing. I want to maintain sole ownership over the words I write and the voice I carry. I want the experience of wrestling with words, of moving through difficult passages, of rewriting and restructuring until everything falls into place.

I want to reap the benefits that writing affords us — to think originally, critically, and creatively. Part of what makes writing so meaningful is that it’s a continuous process of learning and discovery. We have so much to gain when we write, and so much to lose when we let technology do it for us. That’s a fact and a sentiment worth remembering.

I’d love to hear your thoughts! How are you using generative AI as a writer, if at all? What are some of your frustrations with it from a writing standpoint?

I hope you’ll join me for my next SLIP event — with Emory University’s Center for the Study of Human Health. This virtual event, which is open to all and free to attend, will be held next Wednesday, Feb. 18, at 6 p.m. ET. Find more details, and register, here.

Thank you for this. (BTW, Lost & Found is one of my favorite books!)

I worry about the way exposure to AI writing could be influencing my own writing (I think the NYT did a piece about this recently). It's unnerving and a little depressing!

And I also don't want people falsely concluding I'm using one of these tools. As a longtime em-dash user, I was already worried people might think I was using ChatGPT when that was the original "tell." Now I am anxious that some of those pat cadences might be sneaking in!

Thank you for your insights. Valuable piece. I have been thinking a lot about generative AI recently, as my students use AI for everything. I try to tell them that AI is not without errors and that they need to learn to write and read to spot the flaws of AI. And at the same time, they need to learn how to use AI with a critical mindset. I use AI for work purposes, but never for my personal writing.